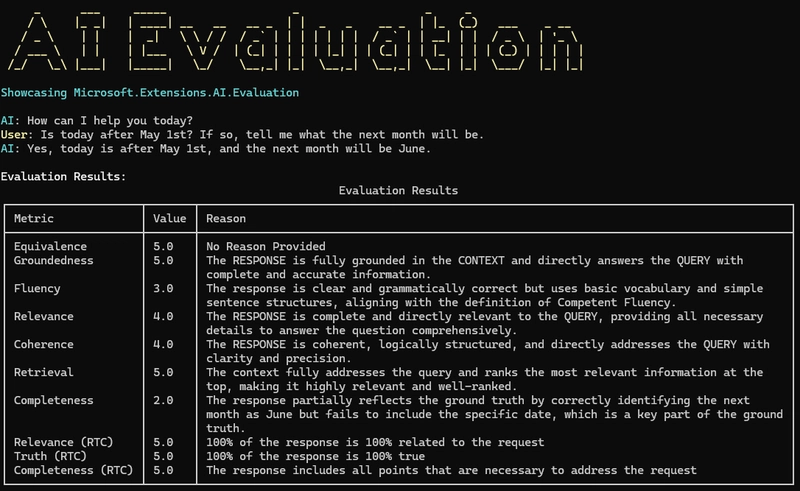

Keeping AI systems performing well over time is a significant challenge, so evaluating them regularly is crucial. Changes such as editing system prompts, adding new tools, or updating the data a system can access all call for a fresh evaluation. Microsoft.Extensions.AI.Evaluation is an open-source library that helps gather and compare metrics for AI systems, and it works with a variety of model providers and services. The evaluation metrics include Equivalence, Groundedness, Fluency, Relevance, Coherence, Retrieval, and Completeness. They are produced by sending a chat session to OpenAI for grading, together with a list of evaluators to run against that session. The resulting scores indicate whether the system's response is coherent, complete, and relevant to the user's query. Results can be displayed in a table using Spectre.Console, which makes it easy to capture and share how a system performs. Microsoft.Extensions.AI.Evaluation also includes HTML and JSON reporting, and it can examine multiple iterations and scenarios in the same evaluation run.
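To make the idea of comparing metrics across scenarios and iterations more concrete, here is a minimal, library-agnostic sketch in Python. It does not use Microsoft.Extensions.AI.Evaluation or its API. The scenario names, the 1-5 scale, the scores, and the helper functions `average_by_scenario` and `format_table` are all assumptions invented for illustration.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical sample data: (scenario, iteration, metric, score on a 1-5 scale).
# These values are invented for illustration and are not real evaluation results.
SAMPLE_SCORES = [
    ("baseline-prompt", 1, "Coherence", 4),
    ("baseline-prompt", 2, "Coherence", 5),
    ("baseline-prompt", 1, "Relevance", 3),
    ("baseline-prompt", 2, "Relevance", 4),
    ("revised-prompt", 1, "Coherence", 5),
    ("revised-prompt", 2, "Coherence", 5),
    ("revised-prompt", 1, "Relevance", 4),
    ("revised-prompt", 2, "Relevance", 5),
]


def average_by_scenario(scores):
    """Average each metric over all iterations of a scenario."""
    grouped = defaultdict(list)
    for scenario, _iteration, metric, score in scores:
        grouped[(scenario, metric)].append(score)
    return {key: mean(values) for key, values in grouped.items()}


def format_table(averages):
    """Render one row per scenario and one column per metric as plain text."""
    scenarios = sorted({scenario for scenario, _ in averages})
    metrics = sorted({metric for _, metric in averages})
    header = "Scenario".ljust(18) + "".join(m.ljust(12) for m in metrics)
    rows = [header]
    for scenario in scenarios:
        cells = "".join(
            f"{averages[(scenario, m)]:.1f}".ljust(12) for m in metrics
        )
        rows.append(scenario.ljust(18) + cells)
    return "\n".join(rows)


if __name__ == "__main__":
    print(format_table(average_by_scenario(SAMPLE_SCORES)))
```

Averaging over iterations smooths out run-to-run variation in model-graded scores, which is one plausible reason to run several iterations per scenario before comparing a change against the baseline.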

dev.to
