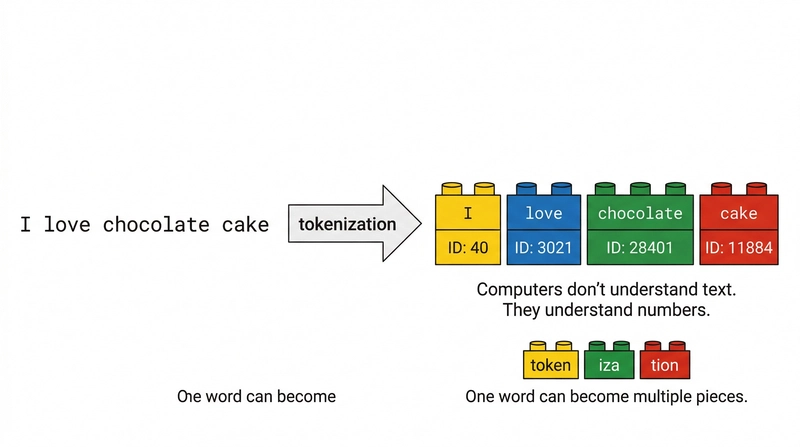

The article explains how large language models (LLMs) work, likening them to LEGO factories. An LLM does not read words directly: it first splits text into tokens, the units of data the model actually processes. The context window, the model's working space, holds a limited number of tokens, which caps how much information the model can consider at once and is therefore a key factor in its performance. Text is generated one token at a time: the model computes a probability distribution over possible next tokens, informed by the attention mechanism, which weights how important each token is in relation to the others and so helps disambiguate meanings. The next token is then sampled from that distribution, with the temperature and top-p settings controlling how random the choice is. Portuguese text incurs a "tokenization premium": it needs more tokens than equivalent English text, which raises costs and shrinks the effective context window. Generating text costs more than inputting it, because every output token requires its own prediction step. Structuring prompts carefully, known as prompt engineering, helps guide the attention mechanism and improves results. The article emphasizes that understanding these mechanisms is crucial for using and optimizing LLMs effectively.
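To make the sampling step concrete, here is a minimal sketch of how temperature and top-p could shape the choice of the next token. It is not taken from the article: the tiny vocabulary, the logit values, and the function names (`softmax_with_temperature`, `top_p_filter`, `sample_next_token`) are all assumptions made for illustration, and real models work over vocabularies of many thousands of tokens.

```python
import math
import random


def softmax_with_temperature(logits, temperature=1.0):
    """Turn raw scores into probabilities; lower temperature sharpens them."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    highest = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - highest) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def top_p_filter(probs, top_p=0.9):
    """Keep the smallest set of most likely tokens whose mass reaches top_p."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = [], 0.0
    for i in order:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= top_p:
            break
    mass = sum(probs[i] for i in kept)
    # Renormalize so the surviving probabilities sum to 1 again.
    return {i: probs[i] / mass for i in kept}


def sample_next_token(vocab, logits, temperature=1.0, top_p=0.9, rng=None):
    """Sample one token after applying temperature and top-p filtering."""
    rng = rng or random.Random()
    probs = softmax_with_temperature(logits, temperature)
    nucleus = top_p_filter(probs, top_p)
    indices = list(nucleus)
    weights = [nucleus[i] for i in indices]
    return vocab[rng.choices(indices, weights=weights, k=1)[0]]


# Hypothetical four-token vocabulary with made-up scores.
vocab = ["brick", "block", "tile", "wheel"]
logits = [2.0, 1.0, 0.5, -1.0]

if __name__ == "__main__":
    for temperature in (0.5, 1.0, 1.5):
        probs = softmax_with_temperature(logits, temperature)
        print(temperature, [round(p, 3) for p in probs])
    print(sample_next_token(vocab, logits, temperature=0.8, top_p=0.9))
```

In this sketch a low temperature concentrates probability on the highest-scoring token, while a small `top_p` removes unlikely tokens from consideration entirely, so the two settings reduce randomness in different ways.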

dev.to
