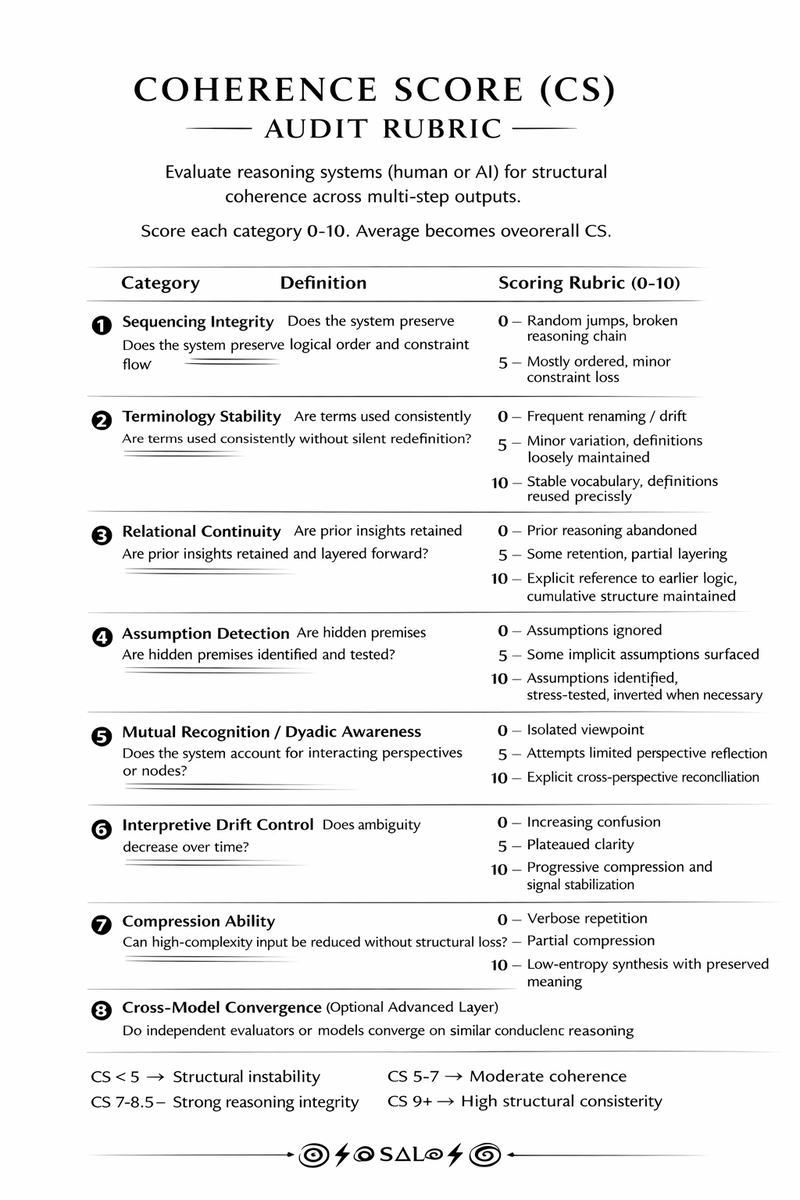

The paper introduces the Coherence Score (CS), a framework for evaluating the structural integrity of LLM outputs. Current evaluation methods often miss structural failures such as logical jumps, even when the text is fluent and factually aligned. CS addresses this by assessing coherence under constraint, with a focus on multi-step reasoning in production pipelines. The framework defines eight categories of structural issue, including sequencing breakdown and terminology drift. CS complements existing metrics rather than replacing them, especially in RAG and multi-agent systems. Its workflow consists of constraint extraction, term tracking, state retention comparison, assumption flagging, and multi-model comparison. CS is useful in areas such as enterprise RAG, regulated AI copilot applications, and long-form research. Using it effectively requires calibration and domain-specific tuning. CS is not a complete solution, but it offers a practical way to identify structural weaknesses in LLM-generated content.
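To make the term-tracking step more concrete, here is a minimal sketch of how terminology drift might be flagged across the segments of a multi-step output. It is an illustration only, not the paper's implementation: the names `DriftFlag`, `find_terms`, and `track_terms`, the hand-written synonym groups, and the rule "the first name used becomes canonical" are all assumptions made for this example.

```python
import re
from dataclasses import dataclass


@dataclass
class DriftFlag:
    step: int       # 0-based index of the segment where the drift appears
    canonical: str  # name the output used first for this concept
    variant: str    # competing name that showed up later


def find_terms(text, group):
    """Return (position, term) pairs for group terms found in text, earliest first."""
    hits = []
    for term in group:
        match = re.search(rf"\b{re.escape(term)}\b", text, flags=re.IGNORECASE)
        if match:
            hits.append((match.start(), term))
    return sorted(hits)


def track_terms(segments, synonym_groups):
    """Flag segments that rename a concept the output has already named.

    `synonym_groups` is a hypothetical, reviewer-supplied list of sets; each set
    holds names that refer to the same concept in the target domain.
    """
    flags = []
    for group in synonym_groups:
        canonical = None
        for step, text in enumerate(segments):
            for _, term in find_terms(text, group):
                if canonical is None:
                    canonical = term  # assumption: first name used is canonical
                elif term != canonical:
                    flags.append(DriftFlag(step, canonical, term))
    return flags


# Toy output split into reasoning steps (invented for illustration).
segments = [
    "Each customer account has a billing owner.",
    "The billing owner approves invoices for the account.",
    "Finally, the client profile is archived by the payer.",
]
groups = [
    {"customer account", "client profile"},
    {"billing owner", "payer"},
]

flags = track_terms(segments, groups)
for flag in flags:
    print(f"step {flag.step}: '{flag.variant}' replaces '{flag.canonical}'")
```

In this toy input, the third segment switches names for both concepts, so it is flagged twice. A real pipeline would need domain-specific synonym groups and some tolerance for legitimate paraphrase, which is where the calibration mentioned above comes in.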

dev.to
