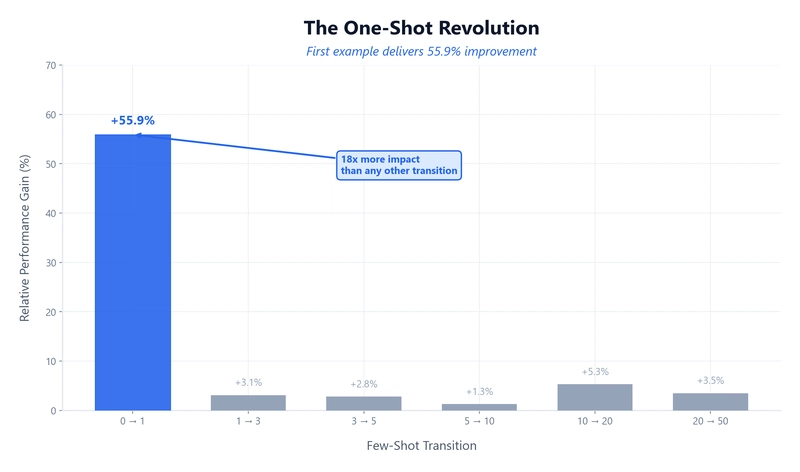

The study investigates few-shot learning with large language models, using GPT-4.1-nano across several datasets. The first example produces a large performance boost (+55.9%) and does most of the work of teaching the model the task. Later examples yield diminishing returns, with only minimal improvements after the first few, and this pattern holds across diverse tasks such as classification and extraction. Statistical analysis, including Welch's ANOVA, confirms that the findings are significant across all tested datasets. The exception is multi-class tasks: those with more than ten classes in particular keep improving as more examples are added. The study also analyzes the return on investment (ROI) of different few-shot strategies and finds that a single example offers the best ROI. It recommends using 3-5 examples for simple tasks and scaling up only for tasks with many classes, and it stresses crafting the first example carefully and benchmarking thoroughly. The methodology uses Monte Carlo cross-validation. The project highlights the practical implications of these findings for designing GenAI pipelines. The next part will explore how classical ML still outperforms GenAI in regression tasks.
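The summary names Monte Carlo cross-validation without showing what it involves. The sketch below is one minimal way to set it up for a few-shot benchmark: on each trial, a fresh random subset of the labeled data becomes the in-prompt examples and the rest is used for scoring. The names `monte_carlo_splits`, `mean_accuracy`, and `predict` are hypothetical and do not come from the study, and `predict` stands in for whatever model call the pipeline actually makes.

```python
import random
from statistics import mean
from typing import Callable, List, Sequence, Tuple

Example = Tuple[str, str]  # (input text, gold label); an assumed data shape


def monte_carlo_splits(
    n_items: int, n_shots: int, n_trials: int, seed: int = 0
) -> List[Tuple[List[int], List[int]]]:
    """Return n_trials random (shot_indices, eval_indices) splits."""
    if not 0 <= n_shots < n_items:
        raise ValueError("n_shots must leave at least one item for evaluation")
    rng = random.Random(seed)  # seeded so runs are repeatable
    splits = []
    for _ in range(n_trials):
        indices = list(range(n_items))
        rng.shuffle(indices)
        # First n_shots items go into the prompt, the rest are scored.
        splits.append((indices[:n_shots], indices[n_shots:]))
    return splits


def mean_accuracy(
    dataset: Sequence[Example],
    n_shots: int,
    n_trials: int,
    predict: Callable[[List[Example], str], str],
    seed: int = 0,
) -> float:
    """Average accuracy over random splits for a given number of shots.

    `predict(shots, text)` is a hypothetical placeholder for the model call.
    """
    scores = []
    for shot_idx, eval_idx in monte_carlo_splits(len(dataset), n_shots, n_trials, seed):
        shots = [dataset[i] for i in shot_idx]
        correct = sum(predict(shots, dataset[i][0]) == dataset[i][1] for i in eval_idx)
        scores.append(correct / len(eval_idx))
    return mean(scores)
```

Calling `mean_accuracy` for `n_shots` of 0, 1, 3, 5, and so on gives one averaged score per shot count. Comparing consecutive scores is then a simple way to see where the returns start to flatten for a given dataset.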

dev.to
