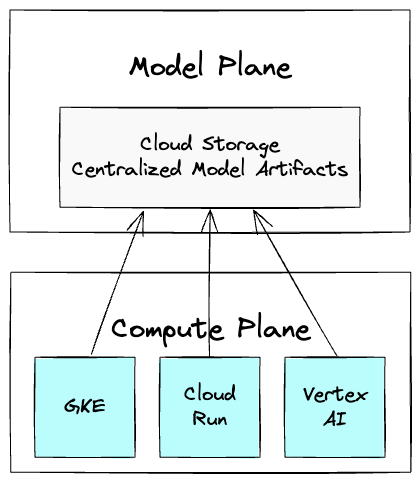

Managing large model artifacts is a significant challenge in MLOps: bundling them with application code often leads to slow deployments. Hosting models in Cloud Storage decouples them from code and treats them as first-class assets with their own lifecycle, separate from compute. The result is a distinct model plane for governance and a compute plane for inference, so a single model version can be served from GKE, Cloud Run, and Vertex AI without duplication.

Best practices for organizing the bucket include clear naming conventions and environment-specific prefixes. Access control through IAM is crucial for security and for giving different users different permissions.

On the performance side, quantization reduces model size and speeds up inference by lowering the numerical precision of the weights. Cache warming can improve initial prompt processing time by pre-computing results for common requests. The Cloud Storage FUSE CSI driver is a recommended way to mount Cloud Storage buckets directly into GKE pods, which can greatly shorten pod startup because the model no longer has to be copied into the container first. For extreme performance needs, Google Cloud Managed Lustre and Hyperdisk ML offer specialized parallel file storage and block storage, respectively.
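The naming-convention and environment-prefix advice above can be made concrete with a small helper. This is only a sketch under stated assumptions: the `<environment>/<model>/<version>/` layout, the environment names, the `ModelRef` class, and the bucket name `example-models` are all hypothetical illustrations and are not prescribed by the source. The code only builds and validates path strings and makes no calls to Cloud Storage.

```python
from dataclasses import dataclass

# Hypothetical environment prefixes; substitute whatever your organization uses.
ALLOWED_ENVIRONMENTS = ("dev", "staging", "prod")


@dataclass(frozen=True)
class ModelRef:
    """Identifies one model version under an assumed bucket layout."""

    bucket: str
    environment: str
    name: str
    version: str

    def __post_init__(self) -> None:
        if self.environment not in ALLOWED_ENVIRONMENTS:
            raise ValueError(f"unknown environment: {self.environment!r}")
        # Reject empty parts and embedded slashes so the layout stays flat
        # and predictable.
        for field_name in ("bucket", "name", "version"):
            value = getattr(self, field_name)
            if not value or "/" in value:
                raise ValueError(f"invalid {field_name}: {value!r}")

    @property
    def prefix(self) -> str:
        # Assumed layout: <environment>/<model name>/<version>/
        return f"{self.environment}/{self.name}/{self.version}/"

    @property
    def uri(self) -> str:
        return f"gs://{self.bucket}/{self.prefix}"


if __name__ == "__main__":
    ref = ModelRef("example-models", "prod", "text-encoder", "v3")
    print(ref.uri)
```

Because every consumer derives the same prefix from the same four fields, a GKE pod, a Cloud Run service, and a Vertex AI endpoint can all point at one copy of a model version. Putting the environment first in the path is one possible choice; it keeps each environment's objects under a single prefix, which may make environment-level access rules easier to express.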

cloud.google.com
